Custom Keyboard Layout

My wired keyboard is basic, but has “Power”, “Sleep” and “Wake Up” where Print Screen/ScrLock/Break usually are (and those three are directly above Insert/Home/PageUp). My wireless keyboard that I use with the PC is a condensed Microsoft thingy with a built-in touchpad. The keyboard works, but one whisper of a nudge of the touchpad and RISC OS instantly freezes. How it works (for my simple keyboard) is that endpoint #1 of the keyboard is the usual keyboard. This outputs [00] [00] [keycode] [00] (and then 12 more NULL bytes) for every keypress.
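As a rough illustration of the report layout described above, here is a minimal Python sketch that pulls the keycode out of one 16-byte report. The function name `parse_keyboard_report` and the constants are hypothetical, chosen only to mirror the byte pattern quoted in the post; they are not part of any RISC OS or USB API, and only a single keycode per report is handled.

```python
from typing import Optional

REPORT_LENGTH = 16   # [00] [00] [keycode] [00] plus 12 more NULL bytes
KEYCODE_OFFSET = 2   # assumed position of the keycode, per the pattern above


def parse_keyboard_report(report: bytes) -> Optional[int]:
    """Return the keycode carried by one report, or None if no key is down."""
    if len(report) != REPORT_LENGTH:
        raise ValueError(f"expected {REPORT_LENGTH} bytes, got {len(report)}")
    keycode = report[KEYCODE_OFFSET]
    return keycode or None


# Example: a report carrying keycode 0x04
sample = bytes([0x00, 0x00, 0x04, 0x00]) + bytes(12)
print(parse_keyboard_report(sample))  # prints 4
```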

Endpoint 2 is for the extended keys: Tested using a modified version of this program.

Yay you! BTW – watch calling yourself an “engineer” in Oregon → https://www.theregister.co.uk/2017/04/29/engineer_fined_for_talking_about_math/ Oh, and your fiction page has a load of "U"s at the end, which NetSurf renders at the top of the page… and Firefox doesn’t render at all because it’s beyond the HTML closing tag so ought to be ignored. ;-)

Whatever works and has been thoroughly tested?

Oh my god, it has a Like key! |

|

|

Useful information, thanks Rick! I think I’m currently called a “Firmware Developer” and not sure what the new role is down as…I know I went to the interview for a “Senior Information Systems Engineer” but they then offered me a less senior role (not enough desktop experience apparently…although the interviewers had never heard of RISC OS…so meh…) so it’s possibly a plain old “Information Systems Engineer” or maybe some other collection of vaguely related words that the HR department conjure up. I’m sure Engineer will feature in there somewhere…it’s that kind of place… No plans to visit Oregon but if I do I’ll make sure to wear a cunning disguise.

Thanks for (a) visiting and (b) bug fixing my site! I don’t have a counter on there but I reckon you must be visitor 9 and the other 8 were me on different computers… Man, that’s weird! Could it be that my site got zapped with the recent outage at Orpheus…? I notice my logo is looking very strange on NetSurf as well. Also feel I should point out that the link I had put in there (and forgotten about) doesn’t actually work! It points to a RISC OS server which I unplugged when I couldn’t get WebJames to play nicely with subdomains (and haven’t had time to fix yet). Still, it’s on the “fiction” page so I guess it’s OK that the site is entirely fictitious! |

|

|

The licence itself is here. |

|

|

Stefano suggested

Meh.

Amtelco KB163 |

|

|

Meh.

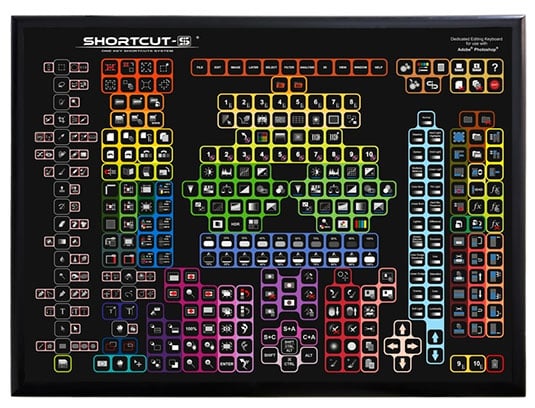

For your industrial grade Photoshop sessions. |

|

|

For more Meh, how about this horror show:

At least this one never reached production. As far as I know… |

|

|

Haha! I actually really quite like it! Although I can’t help feeling there is space for a few more keys on the top left… I often wondered how useful all the extra keys F(x) would be but if they were mapped as symbols like the above then it might be handy. Another stalwart is readily available and it comes with extra loud clicky keys: http://www.pckeyboard.com/page/product/40L5A I had one of their “spacesaver” keyboards for a bit but it was just too loud… |

|

|

Being a touch typist, I like a keyboard I can touch-type all the symbols on – which you can do on a normal keyboard with a small range of shift keys (Ctrl, Alt, Shift is actually enough) and the accents and symbols mapped onto the alphanumeric keys. Then type accent first followed by character for accented characters, just like one always did on manual typewriters. The idea of hunting for the combination and pecking at the keyboard really doesn’t appeal. |

|

|

I too am a touch typist but never got the hang of getting the symbols right! I’ve always been switching between computers/OSs (so far it is: Amiga Workbench, Windows, Linux, old Mac OS, BSD, Solaris, Haiku, RISC OS and new macOS) and usually forget where they are. Also when I was doing all my stuff in XML I generally had to type things like &, < or >. But on reflection the only symbols I use regularly now are the m-dash and, to a lesser extent, the degree sign. It’s a shame that the m-dash comes out as a tilde when converting between Acorn Latin1 and other encodings because it makes my writing look a bit weird sometimes! |

|

|

I don’t use symbols half as much as I used to either – I used to do names and addresses in every latin-alphabet language under the sun (apart from Vietnamese), and a lot of Greek letters in maths and science material. Not to mention typing text in Hindi as well as English, and occasionally in French, German, Russian or Greek. But that was all on DIY keyboard drivers on Acorns. I hate having to use hundreds of four-digit codes that I can’t possibly memorize instead of my fingers simply knowing where each accent is and typing accent-then-character. These days for that sort of stuff I’m mostly typing HTML, and it’s a bit annoying typing |

|

|

And suddenly I’m wanting to type in Hindi again…any progress on a shiny new IKHG anyone? |

|

|

<Ahem> Devanagari? UTF-8 or some 8-bit font? Is there an echo in here? |

|

|

:-) Ah! :-) Devanagari – if UTF-8 is a possibility, I shall be very happy. I’ve got my old 8-bit fonts that combine in the old Devanagari typewriter way so if I could make a keyboard handler that worked with them (as I had in RiscPC days, using IKHG) that would be fine and let me touch type the old way, or with a clever enough keyboard handler I could perhaps touch type the old way and still produce UTF-8; if not I could certainly write an app that would translate a text file in the old 8-bit font into UTF-8. |

|

|

OK. Some awkward questions: Are your old fonts in ISCII encoding (so OM is character 162) or bound direct to a keyboard layout (so OM would be some ASCII value)? Which keyboard layout are you hoping for – InScript, a phonetic IME, the old typewriter layout or something else? ISTM that rather than creating a full InScript driver in isolation, it would be better to delegate the Latin side (so to speak) to the existing handler… though that wouldn’t allow Alt keypresses to produce the Devanagari character in isolation (but is that particularly useful?). The nukta (and danda) handling would require a <Delete><No I actually meant this> in UTF-8 mode. In 8-bit mode you’d just get what you typed… or would you not want the keyboard handler to do that composition? At best only you will know what I’m talking about. At worst I’m talking to myself again. Nurse! |

|

|

(1) bound direct to a keyboard layout – but easily shifted around. However, they’re a mixture of half-characters, full characters, and diacritical marks, following what old-fashioned Hindi typewriters (not the more modern ones) used to produce for each keystroke. (2) Old typewriter layout would be ideal, because I can touch-type on that. (3) You’re partly talking to yourself, but I partially understand. I’d love the keyboard handler to take my old-typewriter-typing and generate UTF-8 on the fly, but I’d be perfectly happy if the keyboard handler produced the ASCII values that would display directly using my old 8-bit (rather randomly arranged) font; I could easily write a little app that translated a file containing the ASCII values into UTF-8 to send to people with proper fonts. |

|

|

I’m sorry I didn’t get back sooner, I’ve been down a rabbit-hole.

This seems rather unfortunate, as it seems you want the key G to generate the code M for example, which just happens to be the glyph in your random font arrangement that you want on your G key, is that right? (I use G & M just as an example) Perhaps I’ve misunderstood. I would have thought that if you were putting Devanagari glyphs on codepoints in the 33-127 range in an 8bit font, you’d put them on the ASCII codepoints that correspond with the keycaps on your real (or imagined) keyboard. So for the equivalent of an InScript keyboard layout you’d put ज on p and झ on P, द on o and ध on O, etc. If you were using an ISCII alphabet, you’d put ज on &BA and झ on &BB, द on &C4 and ध on &C5, etc and then arrange for the keyboard handler to emit &BA/&BB for p/P and &C4/&C5 for o/O etc. And if you had a Unicode Devanagari font, you’d get the handler to emit &91C/&91D and &926/&927 to the UTF-8 alphabet. I’m confused about what you want. |
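To make the three options in the post above concrete, here is a small Python sketch of one lookup table feeding three output modes: a font bound directly to the keycaps, an ISCII alphabet, and UTF-8. The key, ISCII and Unicode values are the ones quoted in the post; the table name `INSCRIPT_SAMPLE`, the function `emit` and the mode names are made up for illustration and do not correspond to any real handler.

```python
# key -> (ISCII byte, Unicode code point), values as quoted in the post
INSCRIPT_SAMPLE = {
    "p": (0xBA, 0x091C),  # ज
    "P": (0xBB, 0x091D),  # झ
    "o": (0xC4, 0x0926),  # द
    "O": (0xC5, 0x0927),  # ध
}


def emit(key: str, alphabet: str) -> bytes:
    """Bytes a hypothetical handler would emit for `key` in the given alphabet."""
    iscii_byte, codepoint = INSCRIPT_SAMPLE[key]
    if alphabet == "keycap":
        # 8-bit font with glyphs placed on the ASCII code of the keycap
        return key.encode("ascii")
    if alphabet == "iscii":
        return bytes([iscii_byte])
    if alphabet == "utf8":
        return chr(codepoint).encode("utf-8")
    raise ValueError(f"unknown alphabet: {alphabet}")


for mode in ("keycap", "iscii", "utf8"):
    print(mode, emit("p", mode).hex())
```

In the first mode the handler does nothing special and the font does all the work; in the other two the handler translates and the font can follow a standard encoding.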

|

|

Only slightly I think. I put the Devanagari glyphs on codepoints in the 128-255 range, not in the 32-127 range. The font I used is here: http://clive.semmens.org.uk/024_ROUGOL/PN.html and the keyboard here: http://clive.semmens.org.uk/024_ROUGOL/PO.html – I had a little app on the iconbar that you could click on a menu to select which keyboard layout you wanted, options being English, Hindi, Russian or Greek. The English layout also covered most Latin alphabet languages – Vietnamese being the only one not covered. Composing multiple diacritical marks on letters is a nightmare – one I tackled for Devanagari because I really needed that. Really what I’d like is to be able to have that little app on the Pi – but IKHG doesn’t work on recent versions of RISCOS. Translating my multicharacter composed diacritic/characters into the UTF-8 single precomposed characters is a piece of cake – perhaps not as one types, but certainly after the typing with a completed file. |

|

|

Aha, good! I thought at least one of us was mad. <hours go by> I’ve spent quite a while trying to work out what all your glyphs are, and I’ve come to the conclusion that you’re using (in some cases) partial letters. So as well as MA, you seem to have a glyph that would correspond to just the M, which would then need AA next to it. Is that right? Obviously this can’t be represented in Unicode without using Private Use characters (which is counterproductive) unless the keyboard handler composed your consonant-vowel combinations into actual letters, much as phonetic Hiragana works. Other glyphs I just don’t recognise at all – like whatever you have on the 3 key, which looks like two I letters. Are these Vedic extensions, a peculiarity of this typeface or something else? This is quite frustrating. An InScript/ISCII/Unicode handler would be trivial! Presumably you did have a working handler, is it merely not 32bitted? |

|

|

Yup, exactly right. I’ve simply duplicated what old-fashioned typewriters used to do – with the same partial and full letters on the same keys as they did, which meant I could continue to touch-type the old way. Although the reason you have the half MA is that when it combines with the following consonant with no intervening vowel, you don’t need the AA next to it. No – it can’t be represented in Unicode, but I can easily write an app that takes a file typed that way and converts it to the corresponding Unicode characters. Depending on what (if anything) replaces IKHG, I could probably write a keyboard handler that does it on the fly, but that would be harder, because unlike accent-character typing in Latin alphabet languages, the diacritics are often typed after the letter, replacing the implied A. The character on the 3 key is a double palatal D – as normally typeset in well-set Hindi. Likewise the one on the zero key is a dental D-Dh combination, and the ones on the key to the left of the 1 key are a double dental T and a dental T-Th combination. I’ve not actually taken a good look at Unicode’s attempt to handle Devanagari. If it doesn’t use partial letters, how does it cope with them? Does it use soft halants, and expect the font rendering to handle the combinations? Or does it just assume that hard halants will do, and that the font rendering will render them all combined?

Indeed. I wrote my handler(s) using IKHG, and IKHG hasn’t (as far as I know) been 32bitted. Writing an IKHG is beyond my capabilities – or at best, at the top of that very steep learning curve, and requiring a C DDE. A 32-bitted IKHG would be a lot more generally useful than a 32-bitted version of my particular Hindi handler, even if the latter were possible without the former. I could then make proper Greek and Russian handlers that allow standard Greek and Russian keyboard layouts instead of the phonetic-QWERTY layouts beloved of 2nd language Greek and Russian typists…and Armenian too, although I don’t type Armenian and none of my Armenian friends are RISCOS users and probably learned to type on computers (and will therefore have learnt on the phonetic-QWERTY layout). |

|

|

Thanks Clive, I just got a message from my Indian friend telling me the same thing. I don’t know nearly enough about Hindi typesetting.

There are both soft and hard halants, but yes, in the modern world the characters are represented in Unicode, not the glyphs. It is up to the font to convert runs of Unicode into the appropriate glyphs in the correct typographic order. And yes, for Devanagari that’s quite complicated.

It is syllables that are encoded (multiple consonants followed by an inherent vowel, like Hiragana) in phonetic order, even though setting the word may require glyphs to be in a different order. Inherent vowels can be overridden by dependent vowel codes. The half-forms you’re using (minus the A stem) are implied in Unicode by a sequence, rather than having a single codepoint. Phonetically the vowel can be killed with a virama (which is a combining accent). Typographically though it is the consonant/dependent-vowel sequence that would cause the font to either combine partial glyphs or use a pre-composed substitute glyph. Multiple consonants (with dead vowels) can be depicted in different ways depending on the font – ideally with a pre-composed glyph (such as your double-D above), or with half-forms as you have, or with the full forms with a virama attached. Those letters that change shape depending on context, such as RA, are always encoded as a single Unicode, and again it’s the font that finds the right glyph. This means a good Unicode Devanagari font will have many hundreds of glyphs, much like latin fonts have ligatures such as fi and fl.

For sanity, one should only use the simplest Unicode representation. This is called normalisation. For example, the latin letter A can have grave or acute accents: À Á. These could be represented as an A followed by a combining accent, i.e. 0041 0300 and 0041 0301, but there are already pre-composed characters encoded: 00C0 and 00C1. However, letter A can have both grave and acute accent attached, and there isn’t a pre-composed Unicode for that so it has to be constructed. This could be done four different ways: 0041 0300 0301, 0041 0301 0300, 00C0 0301 and 00C1 0300. Only the first of these is normalised (in the fully decomposed form, NFD).

What this all means is that it isn’t totally straightforward to convert between your typewriter encoding and Unicode, and even if one contrived Unicode sequences to represent some of your partial characters, the resulting Unicode would be highly non-normalised and probably nonsensical. The handler would have to be very clever indeed to try and avoid that. For example, your partial MA might be encoded as 092E 094D; if you pressed I it would issue the dependent 093F, but if you pressed A it would have to issue 007F to delete the halant. And anyway, you’d be expecting to press the I first (f on your keyboard layout) because it is set to the left of the consonant. Crikey, that’s enough for one message.

Aside: Although Devanagari is complicated it is at least well defined, whereas it was recently discovered that there were 37 different ways of encoding the word “saeub” in Khmer. Chrome still displays three of them identically: ស៊ើប, ស៊េីប and សេុីប (encoded TRA TRIISAP OE BA, TRA TRIISAP E II BA, and TRA E U II BA). It’s the first of those that is normalised. Ironically, this is the word “intelligence”. |
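The four spellings of A with grave and acute listed in the post above can be checked with Python’s standard `unicodedata` module. This is only a sketch for experimenting; the helper `codepoints` is a made-up display function. Note that Unicode defines more than one normalisation form, so which spelling counts as “normalised” depends on the form chosen: NFD decomposes fully, while NFC prefers pre-composed characters where they exist.

```python
import unicodedata


def codepoints(text: str) -> str:
    """Render a string as space-separated four-digit hex code points."""
    return " ".join(f"{ord(ch):04X}" for ch in text)


candidates = [
    "A\u0300\u0301",   # A, combining grave, combining acute
    "A\u0301\u0300",   # A, combining acute, combining grave
    "\u00C0\u0301",    # pre-composed A-grave, combining acute
    "\u00C1\u0300",    # pre-composed A-acute, combining grave
]

for text in candidates:
    nfd = unicodedata.normalize("NFD", text)
    nfc = unicodedata.normalize("NFC", text)
    print(f"{codepoints(text):<16} NFD: {codepoints(nfd):<16} NFC: {codepoints(nfc)}")
```

Running it also shows that normalisation keeps the relative order of the two accents, so the grave-then-acute and acute-then-grave spellings are not folded into one another.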

|

|

The touch typist in me cringes – one types the accent first on a typewriter, and the carriage doesn’t advance. More modern (ie 1970s :-) electric typewriters don’t actually type the accent until they know whether the following character is upper or lower case; old manual typewriters may have had upper case accents or more likely simply omitted them (mine did – if you typed the accent with an upper case character you’d end up with them one on top of the other). I know plenty about Hindi typesetting – but not enough about Unicode! Does Unicode really have all the possible precomposed glyphs of Hindi? That’s not quite as bad as Chinese, but still pretty impressive. I should take a look.

No! I don’t want to represent my partial characters by Unicode sequences at all. The partial character is always followed by another consonant (and very likely preceded and/or followed by one or more diacritics). I want to take that sequence, which together form a syllable, and translate the whole lot into the one pre-composed Unicode glyph. Easily done if Unicode really has all the possible syllables.

Now Khmer is a completely closed book to me. I might have a go at Armenian and/or Georgian, but I think they’re pretty straightforward… |

|

|

I wonder if this is why accents seem to be optional on capital letters in French… not because they are, but rather a century of typewriters incapable of putting an accent on a capital…? |

|

|

I’m pretty sure that’s exactly why, yes. |

|

|

And this is exactly how dead accents in keyboard handlers work. Don’t confuse the keys you press with the Unicodes emitted, they can be quite different. So (on my handler) Alt-Shift-6 is the circumflex “dead accent”, which produces no output but puts the handler into ‘pending Alt’ mode. Then when I press a letter it finds an appropriate code in the current alphabet which, if it’s UTF-8, could produce a base letter followed by a combining accent. (And yes, the Unicode committee’s decision to put modifiers like this after the base character was a silly mistake, but it’s Too Late Now).
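The dead-accent behaviour described above can be sketched as a tiny state machine in Python. Everything here is hypothetical: the class `DeadKeyHandler`, the key name string and the one-entry table stand in for whatever a real handler uses, and the final NFC step is just one way of “finding an appropriate code” when the alphabet is UTF-8.

```python
import unicodedata

# hypothetical key name -> combining accent it arms (U+0302 is the circumflex)
DEAD_KEYS = {"Alt-Shift-6": "\u0302"}


class DeadKeyHandler:
    def __init__(self) -> None:
        self.pending = None  # combining accent waiting for a base letter

    def press(self, key: str) -> str:
        """Return the text emitted for one key press."""
        if key in DEAD_KEYS:
            self.pending = DEAD_KEYS[key]
            return ""  # dead: nothing is emitted yet
        if self.pending is None:
            return key
        accent, self.pending = self.pending, None
        # Base letter first, combining accent second; NFC folds the pair
        # into a pre-composed character when one exists.
        return unicodedata.normalize("NFC", key + accent)


handler = DeadKeyHandler()
print(repr(handler.press("Alt-Shift-6")))  # ''
print(repr(handler.press("e")))            # 'ê'
```

For a base letter with no pre-composed form, the same code simply returns the letter followed by the combining accent.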

No, it definitely doesn’t. That’s what I was hoping to get across. Unicode can encode them, but not as single codepoints. It is up to the font to do the composition and substitution. This is one of the ways in which the FontManager is completely outdated.

This is a crucial point – what you expect to see on screen as you type. The so-called ‘dead accent’ keys don’t produce anything on screen, that’s why they’re ‘dead’. However, I imagine you’re expecting to see a partial letter (a half-form). Then if you’d typed the half-form of R followed by A we’d somehow have to end up with the single Unicode codepoint for RA. Let’s consider Hiragana, which is similarly phonetic and which over 90% of Japanese typists use by typing phonetically on a Latin keyboard – type H and a latin H appears; type I and the H disappears and a ひ (HI) appears; type R and a latin R appears; type A and the R is replaced by ら, and so on. That’s easy, but this is much more complicated, because to make the half-form of RA (0930) appear in Unicode you’d need a virama (halant) – 0930 094D (a decent font would render that as the ‘eyelash’ form). Now if you press I we’d need to end up with 0930 093F, so the handler would have to output 007F 093F, but if you’d typed A then it would only need to delete the halant. If you hadn’t just typed half-R, then I and A would probably be outputting the independent vowel codes 0907 and 0905.
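The temporary-halant behaviour described above could be sketched roughly as follows, using U+094D (the virama code point) for the halant and 007F as the delete code. The class `HalfFormHandler`, the key names and the helper `apply_deletes` are all invented for this illustration; only the code points come from the discussion, and a real handler would need far more cases.

```python
VIRAMA = "\u094D"   # halant
DELETE = "\u007F"   # code the handler issues to remove the previous character

HALF_FORMS = {"half-R": "\u0930"}              # hypothetical key name -> RA
DEPENDENT = {"I": "\u093F", "A": ""}           # A is the inherent vowel: no sign
INDEPENDENT = {"I": "\u0907", "A": "\u0905"}


class HalfFormHandler:
    def __init__(self) -> None:
        self.halant_pending = False

    def press(self, key: str) -> str:
        """Return the code sequence emitted for one key press."""
        if key in HALF_FORMS:
            self.halant_pending = True
            return HALF_FORMS[key] + VIRAMA
        if key in DEPENDENT:
            if self.halant_pending:
                self.halant_pending = False
                return DELETE + DEPENDENT[key]  # drop halant, add vowel sign
            return INDEPENDENT[key]
        self.halant_pending = False
        return key


def apply_deletes(stream: str) -> str:
    """Resolve DELETE codes the way a text editor would, for inspection."""
    out = []
    for ch in stream:
        if ch == DELETE:
            if out:
                out.pop()
        else:
            out.append(ch)
    return "".join(out)


handler = HalfFormHandler()
typed = handler.press("half-R") + handler.press("I")
print(" ".join(f"{ord(c):04X}" for c in typed))
print(" ".join(f"{ord(c):04X}" for c in apply_deletes(typed)))
```

The first line printed is what the handler sends; the second is what is left in the document once the delete has been acted on.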

Nope, that’s what the Unicode font does, the Unicodes merely encode the reading, not the setting, IYSWIM.

It’s due to the lack of room for the accent. Incidentally, since Unicode allows multiple accents to be attached to almost any letter, the font has to cope with moving accents to make room, which is how you can end up with monstrosities like t̀̀̀̀̀̀̀̀h̪̪̪̪̪̪̪ḯ̈́̈́̈́̈́̈́s̝̝̝̝̝̝̝. |

|

|

ANYWAY, the crucial point is that it’s obviously straightforward to produce an 8bit handler for your esoteric encoding, but Unicode is harder (because of the temporary halants) and pointless (because FontManager can’t display Devanagari Unicode adequately). |